Exploring World Transitions via 3D Occupancy World Model

Abstract

Occupancy world models have emerged as a pivotal paradigm for autonomous systems to perceive and predict the evolution of complex 3D environments. Recent advances leverage GPT-like autoregressive and diffusion-based architectures to forecast future occupancy from historical observations. Despite their success, these approaches struggle to capture the heterogeneous motion correlations of surrounding scenes because ego-motion and scene dynamics are entangled, which leads to severe scene degradation in long-term predictions. We present OccTrans, a novel framework for modeling 3D occupancy world transitions that explicitly decouples ego-motion states from token-level dynamics. Our approach introduces a temporal warping module that applies ego-pose transformations to align tokens from previous frames with the coordinate system of the current frame, effectively isolating vehicle motion. A dynamic world model then predicts the residual token-level dynamics induced by moving objects and structural variations. This disentangled formulation mitigates cumulative errors, preserves long-term scene consistency, and improves prediction fidelity. Evaluations on the Occ3D-nuScenes benchmark demonstrate that OccTrans achieves substantial improvements over state-of-the-art methods while maintaining high computational efficiency. Moreover, OccTrans generates coherent long-horizon 3D occupancy sequences.

Method

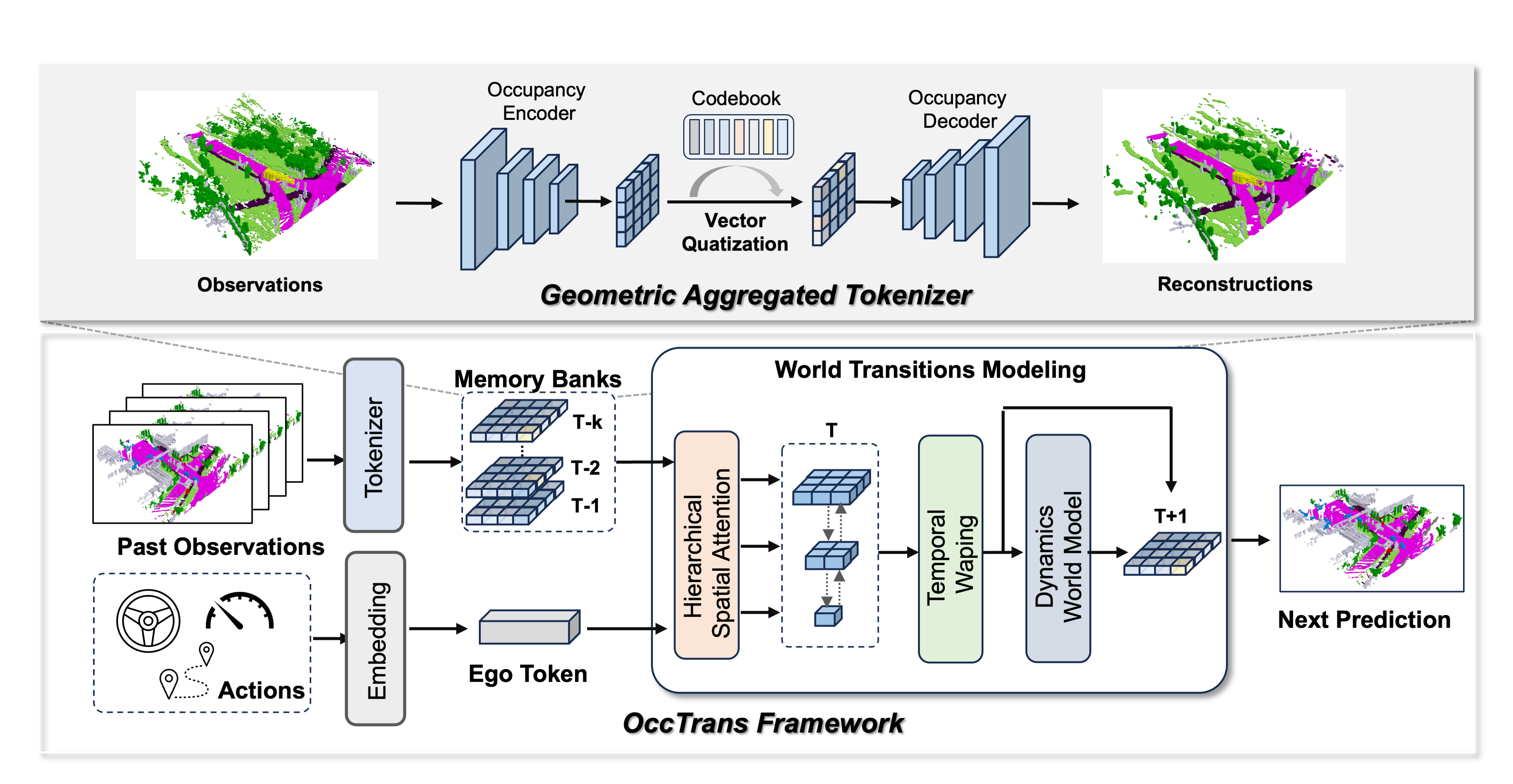

Overview of the proposed OccTrans, which comprises a geometric aggregated tokenizer and a world transition modeling module. The tokenizer, built on an encoder-decoder architecture, discretizes the scene into tokens on the BEV plane. The world transition modeling module predicts future tokens from past ones by modeling temporal warping and dynamics, and has three components: hierarchical spatial attention, temporal warping, and a dynamic world model.
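The temporal warping step can be illustrated with a minimal NumPy sketch. It is not the OccTrans implementation: the function name `warp_bev_tokens`, the planar SE(2) pose, the ego-centered grid layout, and nearest-neighbor sampling are all assumptions made for illustration. The sketch resamples a BEV token grid from the previous ego frame into the current ego frame, so that a static scene lines up across frames and only the residual dynamics remain for a downstream model to predict.

```python
import numpy as np

def warp_bev_tokens(prev_tokens, T_cur_from_prev, cell_size, fill=0.0):
    """Resample BEV tokens from the previous ego frame into the current one.

    Hypothetical helper for illustration only.
    prev_tokens:      (H, W, C) array, ego vehicle assumed at the grid center.
    T_cur_from_prev:  3x3 homogeneous SE(2) matrix mapping previous-frame
                      metric coordinates to current-frame metric coordinates.
    cell_size:        edge length of one BEV cell in meters.
    """
    H, W, C = prev_tokens.shape

    # Metric (x, y) coordinates of every cell center in the current frame.
    rows, cols = np.meshgrid(np.arange(H), np.arange(W), indexing="ij")
    x = (cols - (W - 1) / 2.0) * cell_size
    y = (rows - (H - 1) / 2.0) * cell_size
    pts_cur = np.stack([x, y, np.ones_like(x)], axis=-1).reshape(-1, 3)

    # Inverse warp: find where each current-frame cell was in the previous frame.
    T_prev_from_cur = np.linalg.inv(T_cur_from_prev)
    pts_prev = pts_cur @ T_prev_from_cur.T

    # Convert back to grid indices (nearest-neighbor sampling).
    src_col = np.rint(pts_prev[:, 0] / cell_size + (W - 1) / 2.0).astype(int)
    src_row = np.rint(pts_prev[:, 1] / cell_size + (H - 1) / 2.0).astype(int)
    valid = (src_col >= 0) & (src_col < W) & (src_row >= 0) & (src_row < H)

    # Cells that fall outside the previous grid keep the fill value.
    warped = np.full((H * W, C), fill, dtype=prev_tokens.dtype)
    warped[valid] = prev_tokens[src_row[valid], src_col[valid]]
    return warped.reshape(H, W, C)
```

Under these assumptions, a prediction for the next frame would combine the warped tokens with a learned residual, for example `next_tokens = warped + residual`, where `residual` stands in for the output of a dynamics model. Bilinear sampling and a full 3D pose would be natural refinements.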

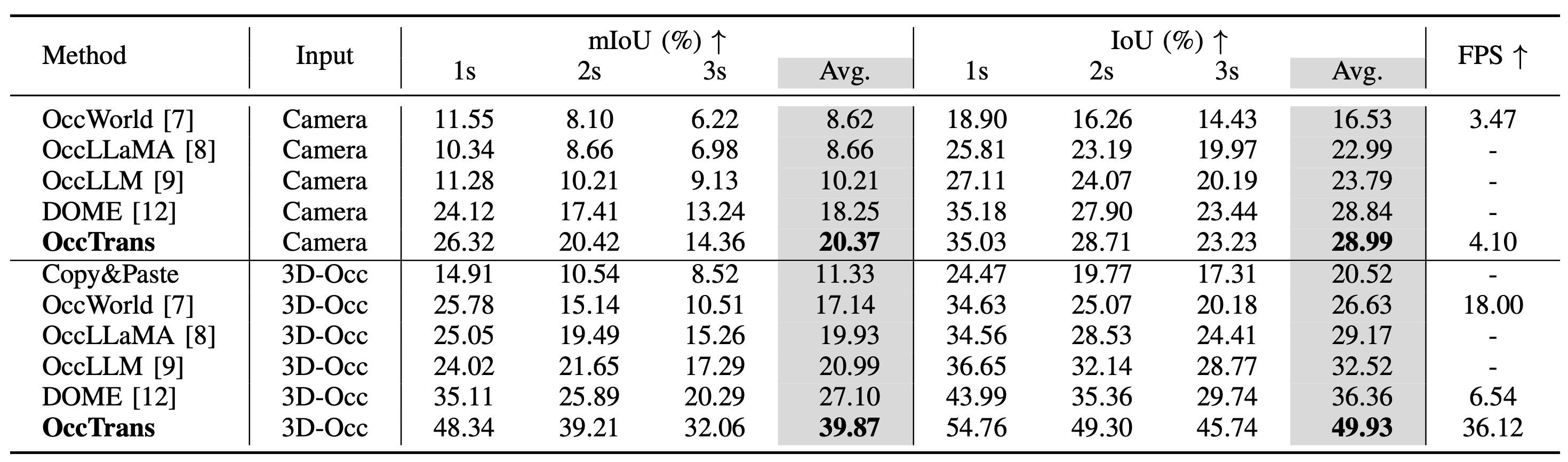

Results

Performance of 4D occupancy forecasting. "Avg." denotes the average performance across the 1s, 2s, and 3s horizons. The best results are highlighted in bold.

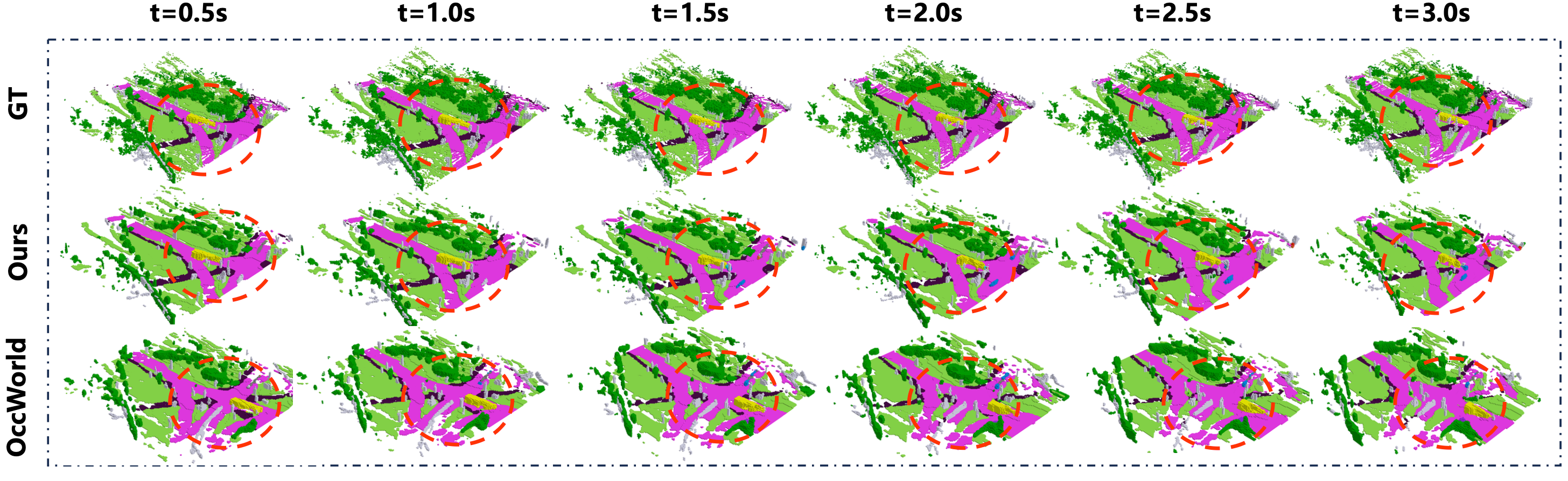

Visualizations of forecasting and planning results from 0 to 12 seconds for the ground truth, OccWorld, and our OccTrans. The regions circled in red show clear differences between OccWorld and OccTrans, which become more apparent when zoomed in.

BibTeX

@article{wang2026occ,
  title={Exploring World Transitions via 3D Occupancy World Model},
  author={Guoqing Wang and Pin Tang and Xiangxuan Ren and Chao Ma},
  journal={IEEE International Conference on Multimedia and Expo},
  year={2026},
}